Autoencoders | Vibepedia

Autoencoders are a class of artificial neural networks designed for unsupervised learning, meaning they learn from data without explicit labels. Their core…

Overview

The conceptual seeds of autoencoders were sown in the 1980s and 1990s, with early forms explored by researchers such as Geoffrey Hinton and Yoshua Bengio. Early work focused on unsupervised pre-training for deep networks, aiming to initialize weights effectively before supervised fine-tuning. The term 'autoencoder' gained traction as the architecture became more formalized, particularly once backpropagation made it practical to train multi-layer networks. Initial implementations were often shallow, but the advent of deep learning frameworks and increased computational power in the 2000s and 2010s enabled much deeper and more complex autoencoder architectures, leading to their widespread adoption across machine learning tasks.

⚙️ How It Works

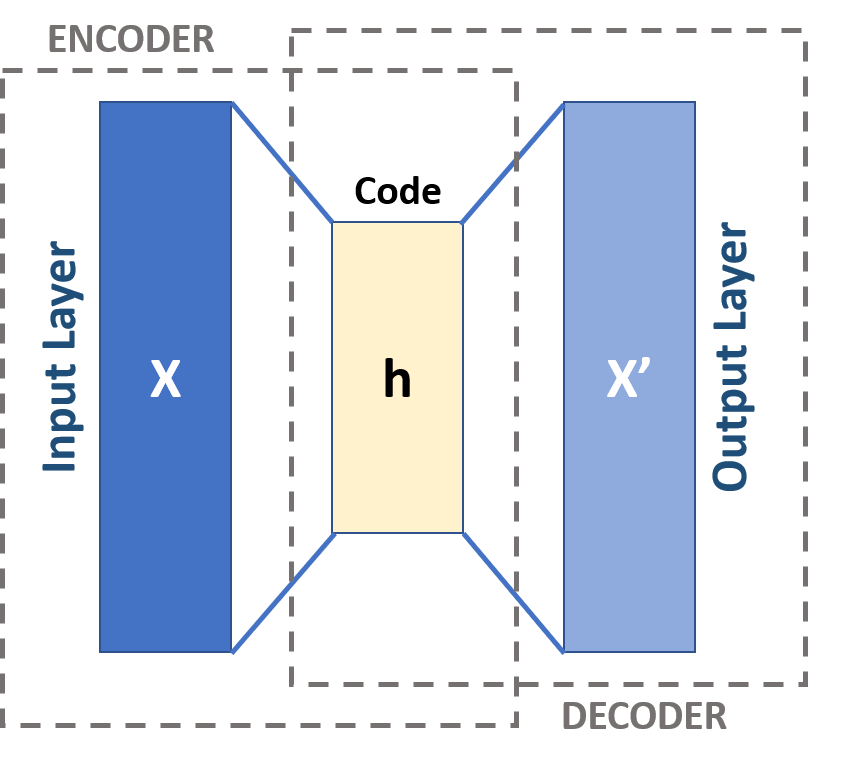

At its heart, an autoencoder consists of two main parts: an encoder and a decoder. The encoder maps the input data $x$ to a latent representation $z$ through a series of transformations, often non-linear, written $z = f(x)$. The decoder then produces a reconstruction $x'$ of the original input from the latent representation, using a function $x' = g(z)$. The network is trained by minimizing a reconstruction loss, usually the squared error between the input $x$ and the reconstructed output $x'$ (averaged over the dataset as the mean squared error), i.e., $L(x, x') = ||x - x'||^2 = ||x - g(f(x))||^2$. When $z$ has fewer dimensions than $x$, or is otherwise constrained, this forces the latent representation to capture the most important information needed to reconstruct the input, thereby achieving dimensionality reduction or feature learning.
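The encoder, decoder, and loss described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: it assumes a linear encoder and decoder with no biases, a one-dimensional latent $z$, full-batch gradient descent, and a toy dataset of points on the line $x_2 = 2x_1$. The names `encode`, `decode`, `train`, the starting weights, and the learning rate are all illustrative choices.

```python
def encode(x, W):
    # z = f(x): linear projection of the input onto a 1-D latent value
    return sum(w_i * x_i for w_i, x_i in zip(W, x))


def decode(z, V):
    # x' = g(z): linear map from the latent value back to input space
    return [v_i * z for v_i in V]


def reconstruction_loss(x, x_rec):
    # L(x, x') = ||x - x'||^2
    return sum((a - b) ** 2 for a, b in zip(x, x_rec))


def mean_loss(data, W, V):
    total = sum(reconstruction_loss(x, decode(encode(x, W), V)) for x in data)
    return total / len(data)


def train(data, W, V, lr=0.01, steps=2000):
    # Full-batch gradient descent on the mean reconstruction loss.
    W, V = list(W), list(V)
    n = len(data)
    for _ in range(steps):
        grad_W = [0.0] * len(W)
        grad_V = [0.0] * len(V)
        for x in data:
            z = encode(x, W)
            x_rec = decode(z, V)
            err = [r - t for r, t in zip(x_rec, x)]  # x' - x
            # dL/dV_i = 2 * err_i * z
            for i in range(len(V)):
                grad_V[i] += 2 * err[i] * z / n
            # dL/dz = 2 * sum_i err_i * V_i, and dL/dW_j = dL/dz * x_j
            dz = 2 * sum(e * v for e, v in zip(err, V))
            for j in range(len(W)):
                grad_W[j] += dz * x[j] / n
        W = [w - lr * g for w, g in zip(W, grad_W)]
        V = [v - lr * g for v, g in zip(V, grad_V)]
    return W, V


# Toy data: 2-D points that actually live on a 1-D line (x2 = 2 * x1).
data = [[t, 2 * t] for t in (-1.0, -0.5, 0.0, 0.5, 1.0)]
W0, V0 = [0.5, 0.1], [0.1, 0.5]  # arbitrary illustrative starting weights

initial_loss = mean_loss(data, W0, V0)
W, V = train(data, W0, V0)
final_loss = mean_loss(data, W, V)
print(initial_loss, final_loss)
```

Because the toy data is intrinsically one-dimensional, a single latent value is enough to reconstruct it, so the loss should fall close to zero. Real autoencoders would typically use non-linear layers and a framework such as PyTorch or TensorFlow rather than hand-written gradients.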

📊 Key Facts & Numbers

Frameworks like TensorFlow and PyTorch facilitate the training of networks with over 100 layers. The computational cost can range from hours to days on GPU clusters for complex datasets.

👥 Key People & Organizations

Key figures in the development and popularization of autoencoders include Geoffrey Hinton and Yoshua Bengio. Yann LeCun's contributions to convolutional neural networks have also been instrumental in developing powerful encoder architectures for image data. Organizations like Google AI, Meta AI, and OpenAI have extensively researched and deployed autoencoder variants for tasks ranging from image compression to natural language processing. The International Conference on Machine Learning (ICML) and the Conference on Neural Information Processing Systems (NeurIPS) are primary venues for presenting cutting-edge autoencoder research.

🌍 Cultural Impact & Influence

Autoencoders have profoundly influenced the fields of computer vision and natural language processing. Their ability to learn meaningful representations from unlabeled data has democratized access to powerful feature extraction techniques, reducing reliance on expensive manual labeling. The concept of learning compressed representations has permeated other areas, influencing data compression algorithms and feature engineering practices. Generative variants, like variational autoencoders, have become foundational to modern generative AI systems, enabling the creation of realistic images, text, and audio, impacting fields from art and design to entertainment and scientific simulation.

⚡ Current State & Latest Developments

Current research in autoencoders is heavily focused on improving their generative capabilities and robustness. Variational autoencoders (VAEs) continue to be refined for higher fidelity image generation, with recent advancements pushing towards photorealistic outputs. Diffusion models, while distinct, share conceptual similarities in learning data distributions and are often compared to or combined with autoencoder principles. Efforts are also underway to enhance the interpretability of latent spaces learned by autoencoders, making them more transparent for scientific discovery. The integration of autoencoders into larger transformer architectures for sequence modeling is another active area, aiming to leverage their compression capabilities within complex NLP tasks.

🤔 Controversies & Debates

A significant debate surrounds the true 'understanding' autoencoders possess. Critics argue that they primarily learn statistical correlations rather than causal relationships, leading to potential failures in out-of-distribution scenarios. The choice of latent space dimensionality remains a point of contention: too low risks losing critical information, while too high negates the benefits of compression. Furthermore, the potential for autoencoders to generate convincing but fabricated data raises ethical concerns regarding misinformation and deepfakes, a debate amplified by the rise of GANs and other generative models. The energy consumption for training massive autoencoder models also presents an environmental challenge.

🔮 Future Outlook & Predictions

The future of autoencoders likely lies in their continued integration into hybrid models and their application to increasingly complex data modalities. Expect to see autoencoders playing a crucial role in multimodal learning, where they can compress and align information from diverse sources like text, images, and audio. Their application in scientific research, particularly in fields like drug discovery and materials science, is poised for significant growth, enabling the identification of novel patterns in high-dimensional experimental data. Advances in unsupervised and self-supervised learning will further enhance their ability to learn from vast, unlabeled datasets, potentially leading to more general-purpose AI systems.

💡 Practical Applications

Autoencoders find practical application across a wide spectrum of industries. In finance, they are used for fraud detection by identifying anomalous transaction patterns. In healthcare, they aid in medical image analysis, such as detecting tumors or anomalies in X-rays and MRIs. Cybersecurity firms employ them for network intrusion detection, spotting unusual traffic patterns. The entertainment industry uses them for image and video compression, as well as for generating special effects. In robotics, they can help robots learn efficient representations of their environment for navigation and manipulation tasks, often in conjunction with reinforcement learning algorithms.
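Anomaly-detection uses such as fraud or intrusion detection commonly rely on reconstruction error: inputs that resemble the training data reconstruct well, while unusual inputs do not. The sketch below illustrates that idea under loud assumptions: the "trained" autoencoder is a hand-set linear projection onto the line $x_2 = 2x_1$, and the weights `W`, `V`, the `THRESHOLD` value, and the sample points are all hypothetical, chosen only to make the behaviour visible.

```python
def reconstruct(x, W, V):
    # Encode to a 1-D latent value, then decode back to input space.
    z = sum(w_i * x_i for w_i, x_i in zip(W, x))
    return [v_i * z for v_i in V]


def reconstruction_error(x, W, V):
    # Squared distance between the input and its reconstruction.
    x_rec = reconstruct(x, W, V)
    return sum((a - b) ** 2 for a, b in zip(x, x_rec))


# Hypothetical weights standing in for a trained model: together they
# project any point onto the line x2 = 2 * x1 ("normal" data).
W = [0.2, 0.4]
V = [1.0, 2.0]

# Hypothetical cut-off; in practice it would be tuned on held-out data.
THRESHOLD = 0.1

samples = [
    [0.5, 1.0],   # exactly on the line
    [1.0, 2.1],   # close to the line
    [1.0, -1.0],  # far from the line
]

errors = [reconstruction_error(x, W, V) for x in samples]
flags = [e > THRESHOLD for e in errors]
print(flags)  # [False, False, True]
```

Only the third sample is flagged, because it lies far from the structure the model can reproduce.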

Key Facts

- Category: technology
- Type: technology